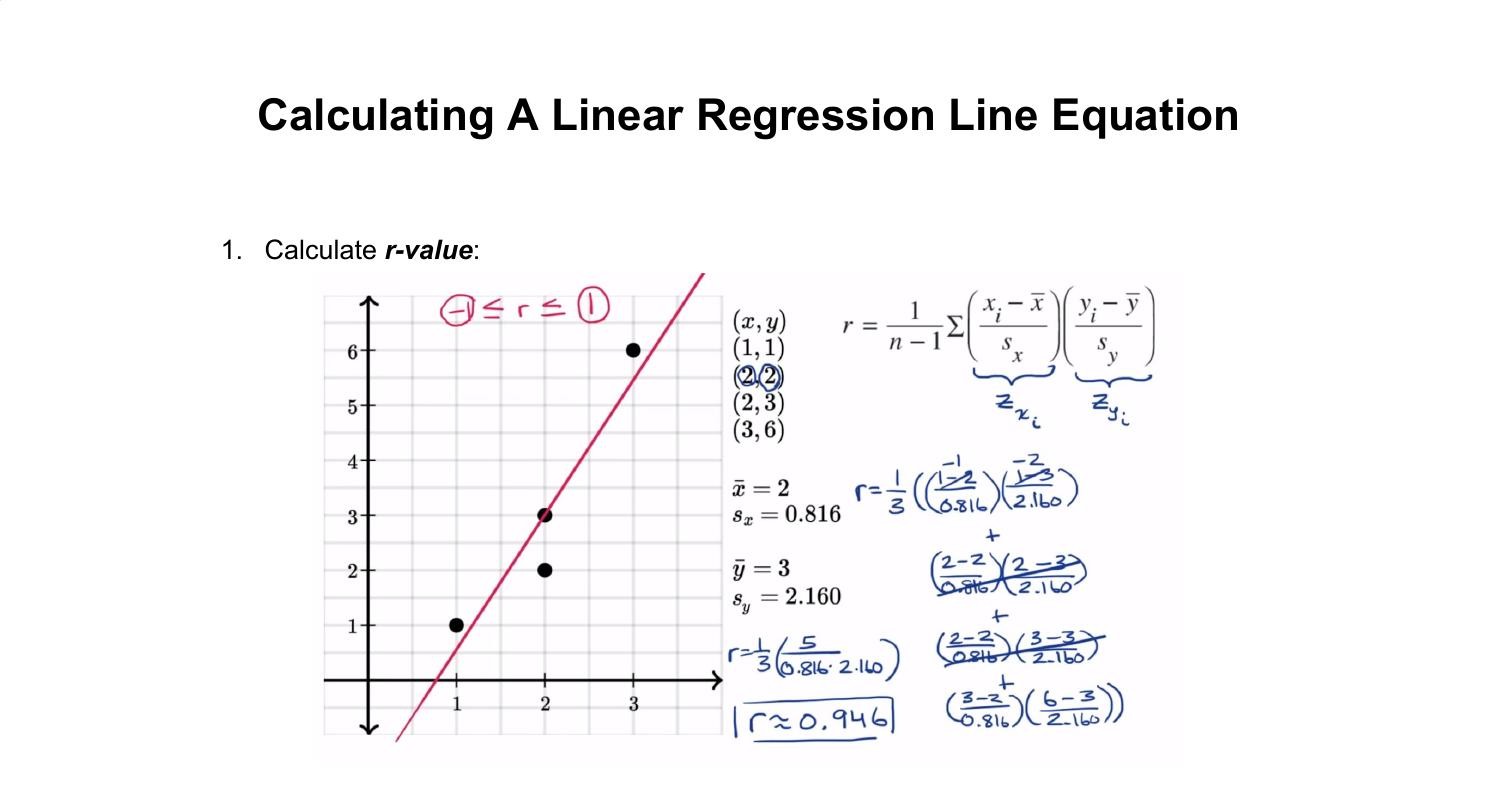

We're going to embark on something that will just result in a formula that's pretty straightforward to apply. We're going to have to do a little bit of calculus near the end; we're going to have to do a few partial derivatives. So if any of that sounds daunting, or sounds like something that will discourage you in some way, you don't have to watch it. You can just get the formula that we're going to derive.

So what we're going to think about here is, let's say we have n points on a coordinate plane. They don't all have to be in the first quadrant, but for ease of visualization, I'll draw them all in the first quadrant. Let's say I have a point over here, then another point over here, and so on, going n spaces, or n points I should say. What I want to do is find a line that minimizes the squared distances to these different points: a line that kind of approximates what these points are doing. That is what the topic of the next few videos is going to be.

Let's say the line is $y = mx + b$, where $m$ is the slope of the line and $b$ is the y-intercept. I want to minimize the squared error from each of these points to the line. For each of these points, the error between it and the line is the vertical distance between that point and the line. If you take $x_1$ and put it into the equation of the line, you get the point on the line directly above or below the first point, and its y value is literally going to be equal to $mx_1 + b$. So the first error, error one we could call it, is the difference between the y value of this point and the y value of the line: $y_1 - (mx_1 + b)$. Likewise, the nth error is going to be $y_n - (mx_n + b)$.

We could just take the straight-up sum of the errors, but errors above and below the line would cancel each other out, so instead we want to minimize the square of the error between each of these points and the line. So let me define the squared error against this line as being equal to the sum of the squared errors for each of these n points:

$$SE_{\text{line}} = \big(y_1 - (mx_1 + b)\big)^2 + \big(y_2 - (mx_2 + b)\big)^2 + \cdots + \big(y_n - (mx_n + b)\big)^2$$

The difference between an error and a residual is that there is (in theory) a TRUE regression line, which we will never know, and then there is the line that we estimate to be the regression line. The difference between a point and the TRUE regression line is its error. The difference between a point and the ESTIMATED regression line is its residual. When we fit a regression line, we make the sum of our residuals equal to 0, but that does not necessarily mean that the sum of our errors is 0 (by its nature, there will always be some error in statistics).

You can see this if you simulate some data in a spreadsheet. Here is an example that hopefully won't confuse. Say your TRUE regression is Y = 5 + 10X, and we have 5 different possible inputs for x (1, 2, 3, 4, 5), so for x = 1, y = 15; for x = 2, y = 25; and so on. Now, if each observation had some normally distributed error and we fitted a line to that data, we might get a line that is really close to Y = 5 + 10X, but we probably would not get it exactly. We might actually get something like Y = 5.3 + 9.5X.

Let's say one of our randomly distributed observations was (3, 30). The predicted value for Y according to our estimated regression line is 5.3 + 9.5(3) = 33.8, but the TRUE regression line (which we won't actually have) would have predicted 35. So our ERROR is 30 - 35 = -5, but our RESIDUAL is 30 - 33.8 = -3.8.
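To make both ideas concrete, here is a minimal Python sketch (standard library only) that repeats the spreadsheet experiment: it simulates one noisy observation per x in 1..5 around the TRUE line Y = 5 + 10X, fits a line by least squares, and compares residuals with errors. It assumes the standard closed-form least-squares slope and intercept, which is the kind of formula the transcript says the videos will derive but which is not derived here. The helper names (`squared_error`, `fit_least_squares`, `true_line`, `simulate`) and the noise level `sigma=3.0` are hypothetical choices for illustration.

```python
import random


def squared_error(xs, ys, m, b):
    """SE_line: sum over all n points of (y_i - (m*x_i + b))**2."""
    return sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))


def fit_least_squares(xs, ys):
    """Closed-form slope m and intercept b that minimize squared_error.

    Assumption: this is the standard ordinary-least-squares result,
    used here without showing the calculus.
    """
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    m = sxy / sxx
    b = y_bar - m * x_bar
    return m, b


def true_line(x):
    # The TRUE regression line from the example (unknown in practice).
    return 5 + 10 * x


def simulate(seed=0, sigma=3.0):
    """One observation per x in 1..5, each with normally distributed error."""
    rng = random.Random(seed)
    xs = [1, 2, 3, 4, 5]
    ys = [true_line(x) + rng.gauss(0, sigma) for x in xs]
    return xs, ys


if __name__ == "__main__":
    xs, ys = simulate()
    m, b = fit_least_squares(xs, ys)
    residuals = [y - (m * x + b) for x, y in zip(xs, ys)]  # vs. ESTIMATED line
    errors = [y - true_line(x) for x, y in zip(xs, ys)]     # vs. TRUE line
    print(f"estimated line: Y = {b:.2f} + {m:.2f}X")
    print(f"SE_line = {squared_error(xs, ys, m, b):.3f}")
    print(f"sum of residuals = {sum(residuals):.6f}")  # zero up to rounding
    print(f"sum of errors    = {sum(errors):.6f}")     # generally not zero

    # Worked example from the text: observation (3, 30), estimated line Y = 5.3 + 9.5X.
    x, y = 3, 30
    predicted = 5.3 + 9.5 * x
    print(f"error = {y - true_line(x)}, residual = {y - predicted:.1f}")
```

Running it prints the fitted line, the squared error of that line, a sum of residuals that is zero up to floating-point rounding, and a sum of errors that is generally not zero; the exact numbers depend on the seed. The last line reproduces the (3, 30) example: an error of -5 and a residual of -3.8.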
